The human-AI crossroad

Debate about human agency in the age of AI is at its most heated, with reports of over 10,000 job cuts in the U.S. in 2025, all directly linked to automation. Entry-level roles have been hit hardest, as they often involve repetitive tasks that can be easily automated. At the same time, “AI fluency” is becoming a distinguishing factor for employment.

Brynjolfsson et al. (2023, NBER) reported a 14% productivity gain in call centres, with the largest uplift seen amongst the least experienced human agents. Additionally, Noy & Zhang (2023) found that ChatGPT substantially raised productivity in writing assignments, cutting time-to-output by 40% and raising quality by 18%. Results like these are hard to resist.

However, in a recent discussion between Dr Jay van Zyl, our founder, and Dr Puleng Makhoalibe, AI strategist, consultant, and founder of Alchemy Inspiration, it became clear that businesses risk being blinded by these gains. Ultimately, if AI becomes more harmful to human beings than it is beneficial, it is no longer the ‘tool’ it is claimed to be, but rather an end in itself.

The consequences of removing human agency:

- Loss of accountability and recourse: When the system makes the final decision and no person is truly responsible, harmed users have no clear path to appeal or seek redress. Most emerging regulations (EU AI Act, NIST AI RMF) require “meaningful human oversight” precisely to preserve accountability, explainability, and the right to contest decisions.

- Brittleness in the real world: Models perform well on average cases but fail on long‑tail edge cases and shifting contexts. Humans carry tacit knowledge about exceptions, norms, and context that data often misses. Fully automated decisions amplify small model errors into large systemic failures.

- Objective misspecification and Goodhart’s law: Optimizing narrow proxies (clicks, cost, speed) without human judgment leads to goal misalignment and perverse outcomes. Human review is often the only check that values like fairness, dignity, and safety are being met.

- Automation bias and de-skilling: If humans are reduced to rubber‑stamping AI output, they become less capable of catching errors over time. This erodes organizational resilience and ultimately de-skills the workforce.

Why ecosystem.Ai cares about the human

Jay has always been interested in understanding how things work. In the discussion with Puleng, he reflected on his time at school, when he had a stutter and was exempt from doing any form of public speaking. Instead, it was the language of technology that became his primary way of engaging with the world. He loved its predictability, the comfort of knowing that what he told it to do, it would do. He became convinced that technology would “solve all the world’s problems” – if humans just cooperated.

An early design of the ecosystem.Ai platform, with the human at its centre.

Then, one day in the 90s, around the time Jay had started his postgraduate degree, he woke up and realized one complication: the uncompromising, stubborn, soft-shelled, two-legged, breathing, feeling and thinking human that existed at the centre of the system.

The reality is that technology functions in a human-dominated system. And this organism doesn’t do what it’s supposed to do. When it sees an if statement, it blatantly ignores it and does its own thing instead. Every decision and action the human takes is about how it feels in the moment, or what it thinks might happen next (usually based on some irrational logic).

Jay’s interest in computational social science blossomed from this. He embarked on a journey of developing technology that could see the human through data points, that could encompass the emotional nuance driving their decisions, and take into account the impact of context in real time. ecosystem.Ai holds the concept of ‘the human in the loop’ at its core – building the product around the human, rather than in opposition to it.

Ecogentic takes behavioral tendencies into account throughout customer journeys. Notably, our AI agent builder does not aim to replace the human agent in customer engagement, but rather determines when human intervention is best suited. Our intent detection engine, backed by behavioral science, takes over repetitive tasks but recognises when a customer needs access to nuanced human thinking. Ultimately, this leaves human agents to do work that is more stimulating and better suited to their skills, which leaves them more satisfied.
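
As a rough illustration of this kind of handoff, the sketch below routes a conversation to either an AI agent or a human agent. It is not ecosystem.Ai's implementation: the intent labels, the `distress` flag, the 0.8 confidence threshold and the `route` function are all hypothetical assumptions made for the example.

```python
from dataclasses import dataclass

# Hypothetical set of routine intents; a real deployment would define its own.
REPETITIVE_INTENTS = {"balance_enquiry", "password_reset", "order_status"}


@dataclass
class DetectedIntent:
    label: str         # hypothetical intent name produced by some classifier
    confidence: float  # classifier confidence in the range [0, 1]
    distress: bool     # True if the message shows frustration or distress


def route(intent: DetectedIntent, min_confidence: float = 0.8) -> str:
    """Return "ai" for routine, confidently detected requests, else "human"."""
    # Emotional signals go to a person, whatever the request is.
    if intent.distress:
        return "human"
    # If the classifier is unsure, default to human judgment.
    if intent.confidence < min_confidence:
        return "human"
    # Only known repetitive tasks are automated.
    if intent.label in REPETITIVE_INTENTS:
        return "ai"
    return "human"


if __name__ == "__main__":
    print(route(DetectedIntent("password_reset", 0.95, False)))
    print(route(DetectedIntent("password_reset", 0.95, True)))
```

The design choice worth noting is that the human is the default. Automation has to be earned by a known, routine intent detected with high confidence, and every other case falls through to a person.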

Human agency on a spectrum

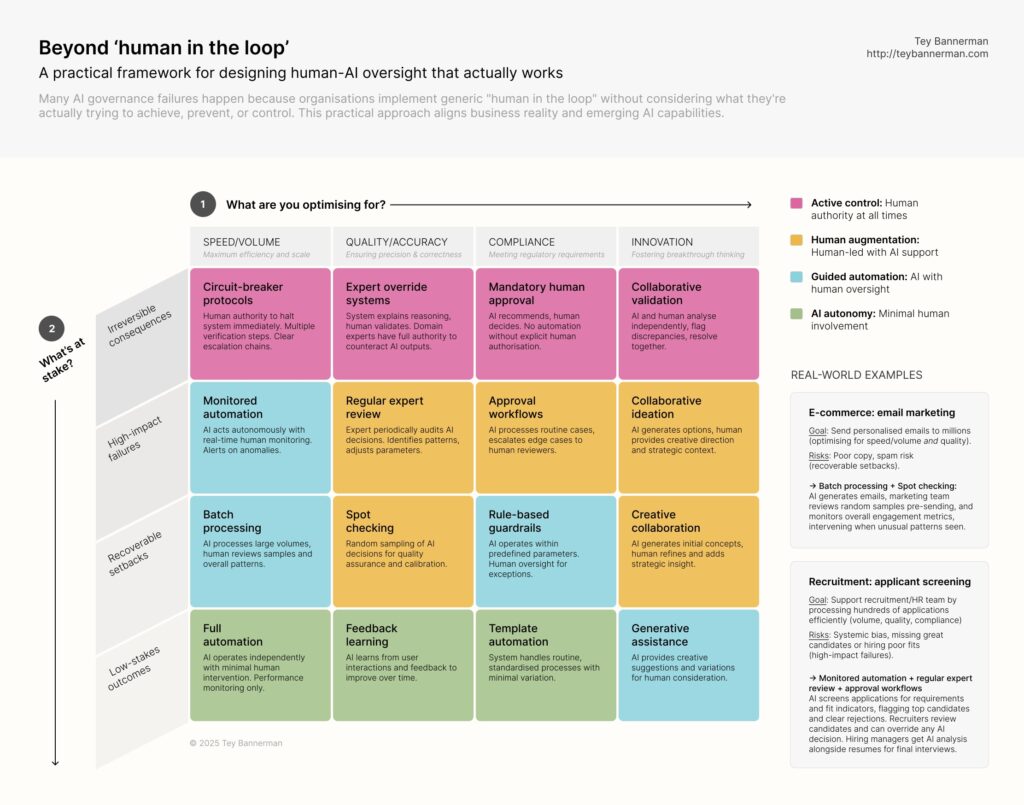

Tey Bannerman, AI strategist and ex-McKinsey Partner, has presented a practical framework for implementing AI in a way that complements humanity. In a LinkedIn post, he pointed to the need for “intentional human-AI oversight design”. Rather than blindly implementing AI capabilities in areas where human beings would otherwise thrive, his framework sets out how to pair humans and AI effectively.

A framework that accounts for human agency in AI implementation, by Tey Bannerman.

AI adoption requires nuanced thinking and, most importantly, an educated approach from business leaders. By beginning with the question “What am I optimizing for?”, you force your AI strategy to align with real business goals. Then, by asking “What’s at stake?”, you can reduce the risk of being blinded by the shine, seeing beyond flashy AI capabilities and getting into the nitty-gritty of the risks that exist.

In the words of MIT professor, David Autor, “We do see reduced hiring at firms adopting AI in some of the tasks that AI is good for — information processing, some software coding, decision-making tasks. But I don’t think that is in any sense a full description of what’s going to occur. It’s actually a challenge of job design to figure out how we reallocate and redesign work, given the tools we now have available. […] The good case for AI is where it enables people with foundational expertise or judgment to do more expert work with less expertise.”

Ultimately, implementing AI that will be truly beneficial for businesses comes down to a “design choice”. Work, as we know it, needs to be reimagined. Just as AI should not be implemented in opposition to humans, so too must humans embrace what its capabilities mean for the future, and create a system that keeps the human in the loop.